IDF Spring 2005 Day 1 - Gelsinger Speaks, nForce4 Intel and more

by Anand Lal Shimpi & Derek Wilson on March 2, 2005 3:00 AM EST- Posted in

- Trade Shows

IAMT, VT, and why should I want Virtualization?

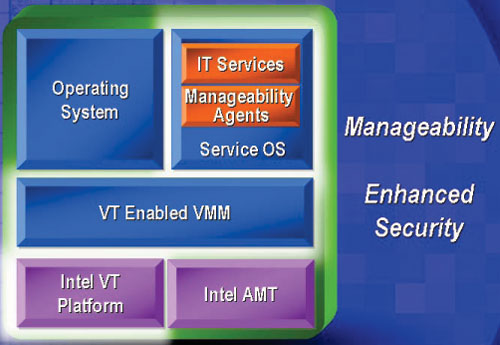

IAMT is Intel's new Active Management Technology. They didn't go into much (any) detail on how it works, but they talked about it as a separate entity on the hardware level that is able to monitor and correct problems on the rest of the system. This enables higher levels of reliability across the Intel platform. This will likely be more interesting to the server administrator than to the desktop customer. From other descriptions of IAMT, we can speculate that IAMT consists of a custom operating system stored in hardware (similar to the BIOS) that allows secure network access and is able to interact with the rest of the system. We know that IAMT will be able to operate regardless of software or hardware state. In other words, having a hard locked computer, dead hard drive, or even being powered down (as long as there is hard power to the system) won't get in the way of IAMT working.

One of the biggest advances Intel is trying to push now is virtualization (with Intel Virtualization Technology: VT). Other than simply adding another way to utilize the parallelism the dual core push will offer, hardware virtualization will allow quite a few new usage models for personal computers.

Hardware virtualization is the ability of the platform to partition hardware and allow software to run as if it had full control of the hardware. Companies like VMware have been building software-level technology that attempts to virtualize systems for quite a while, but there are many more advantages to virtualizing at the hardware level.

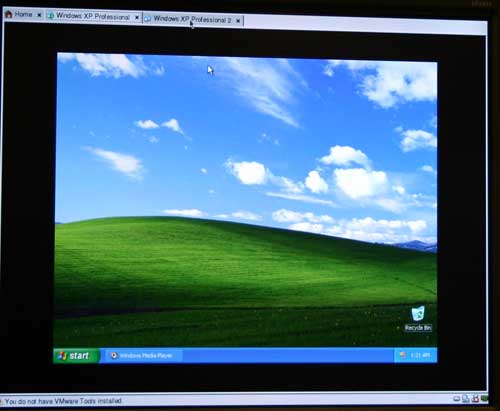

A version of VMware running with Intel VT support

As we have pointed out in past IDF shows, virtualization could allow one single computer to run more than one OS, even mixing Windows and Linux. Multiple people could be using a single system as if it were more than one, provided the processing power were adequate.

Aside from this perspective, Intel also sees security as a major advantage of virtualization. In the past, they have talked about maintaining a dedicated management partition running a standardized installation. This would allow companies to easily fix problems on a PC from a sort of quarantined operating system (that even the computer's everyday user would not have access to).

To show the advantage of this, Pat demonstrated what happens when a computer on a corporate network is infected with a virus. In the demo, the IAMT-enabled server that the computers are connected to automatically detects the virus and disconnects the machine from the network. On a non-virtualized computer, the user must then run a virus scanner locally. On the virtualized hardware, Intel is able to disconnect the infected partition and have the management partition scan and clean the system. Once the management partition has removed the virus, the user's partition can reconnect to the network virus-free. The demo showed all of this happening in the time it took Pat to explain what was going on.
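The sequence in the demo (disconnect, scan and clean from the management partition, reconnect) can be sketched as a small model. This is only an illustration of the order of events described above; Intel gave no implementation details, so every name here (`Partition`, `VirtualizedPC`, `handle_infection_alert`) is hypothetical and nothing below reflects a real IAMT or VT interface.

```python
from dataclasses import dataclass, field


@dataclass
class Partition:
    """Hypothetical stand-in for one virtualized partition on the PC."""
    name: str
    infected: bool = False
    connected: bool = True


@dataclass
class VirtualizedPC:
    """Toy model of a PC with a user partition watched by a management partition."""
    user: Partition = field(default_factory=lambda: Partition("user"))
    log: list = field(default_factory=list)

    def handle_infection_alert(self) -> None:
        # Step 1: the network-side alert causes the user partition to be cut off.
        self.user.connected = False
        self.log.append("user partition disconnected")

        # Step 2: the management partition scans and cleans the user partition.
        if self.user.infected:
            self.user.infected = False
            self.log.append("management partition cleaned user partition")

        # Step 3: the cleaned user partition rejoins the network.
        self.user.connected = True
        self.log.append("user partition reconnected")


pc = VirtualizedPC()
pc.user.infected = True
pc.handle_infection_alert()
for entry in pc.log:
    print(entry)
```

The point of the model is simply that the user partition never has to run the scanner itself; the cleanup is driven from a partition the everyday user cannot touch.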

19 Comments


sprockkets - Thursday, March 3, 2005 - link

FDimms, the way we get around having each processor have its own memory controller but still have a lot of slots.

Of course the right time for you to enter 64 bit for the desktop is now, cause you managed to kludge it on your processors oh BFD.

Sorry Patty, but the AMD/Linux crowd didn't need to wait on the Wintel crowd for 64 bit.

Viditor - Thursday, March 3, 2005 - link

mickyb - "How is this any different than multi CPU SMP? It isn't, except for compressing them to a smaller space"

I agree, at least for the Intel model. The AMD dual core is quite different however, in that the 2 cores are connected locally, and the MOESI protocol AMD uses allows for easy cache snooping.

mickyb - Wednesday, March 2, 2005 - link

#16 My statement was in context of the performance issue, not cost. The graphs imply that multi-core is the savior, when we have been experiencing what multi-cpu will do. Any graph that implies "exponential" growth for multi-core vs. single core is just a lie. I have been creating tools that analyze system performance for a while. It is far from the truth. It is still SMP.

JarredWalton - Wednesday, March 2, 2005 - link

#9: "How is this any different than multi CPU SMP? It isn't, except for compressing them to a smaller space. SMP has its problems as well and the number of CPUs does not create an exponential graph like Intel is implying."

Actually, there is one major difference: one socket is sufficient. Designing motherboards with two CPU sockets increases costs a lot, and so the SMP market in the desktop space is extremely small. There are so many applications that *could* use multiple threads that don't, mostly because programmers would end up spending tons of effort in improving performance on a small percentage of systems.

Just like the move to 64-bits will have more benefits in the future rather than in the short term, multi-core is looking to the future rather than the present. Software will have to be coded properly, but once that is done, multi-core will start to give us a lot of improvements in performance. Now that programmers have an incentive to support threading (probably almost all CPUs sold by late 2007 are going to be SMP), they will spend the time.

Viditor - Wednesday, March 2, 2005 - link

Cygni - "Im also wondering whether Intel will allow the Nforce4 to use the 1066fsb?"

Good question...

I would ASSUME that the answer to this is yes... "In for a penny, in for a pound" as they say. Considering all the trouble Intel has been having with 3rd party developers lately, I would assume that they will support Nvidia completely (if not, why support them at all?).

Cygni - Wednesday, March 2, 2005 - link

I thought the Pressler info was pretty shocking too, Viditor... especially considering its slated to replace the single die PD's. Wouldnt two phsyical cores over FSB be far slower? Really strange.

Im also wondering whether Intel will allow the Nforce4 to use the 1066fsb?

johnsonx - Wednesday, March 2, 2005 - link

We all said it, and I think recent events and comments at IDF prove it beyond a reasonable doubt: Intel and Microsoft were in cahoots on XP 64-bit all along. XP x64 wasn't ever going to be released before Intel had 64-bit capable P4's and even Celerons ready to go.

Viditor - Wednesday, March 2, 2005 - link

I hadn't realised how very different Intel's dual-core is to AMD's until now...

Just this one line:

"The two cores in Pressler are totally independent, meaning that they must communicate with each other over the external front side bus and not over any internal bus"

That will be a HUGE latency problem when compared to AMD's dual-core! It takes 1 clock for AMD to communicate from one core to another because of Direct Connect (the on-die "switcher" between cores), I would guess that Intel will require at least 3-5 clocks (based on it taking 6-8 clocks for their SMP)...

skiboysteve - Wednesday, March 2, 2005 - link

even if intel did implement x86-64 at the "right time" at the launch of Win64, how is this the right time? Anyone who wants to use Win64 that intel now harks as the future, will have to buy a new chip, while A64 users already have everything they needed.

I dont even have an athlon64 but its just silly to say you are at the forefront of some new technology (64bit) when everyone has to go buy a new CPU to use it, amd had it right by equiping users before the "right time" so they were prepared.

Anyway. Yeah FBDIMMs look tight, so does rambus, the DDRx shit is so lame. by the time they get DDR2 up and running they find 100 ways to do it better and no one wants DDR2 anymore, its stupid.

and BTX is a joke

sphinx - Wednesday, March 2, 2005 - link

I agree with mickyb. I am more interested in the FBDIMM than NF4 for Intel and dual cores.